Blog

Let Claude Run Agent Infrastructure

Part 10 of a series on Claude API features for builders. Over the last nine posts I’ve worked through the Claude API from the ground up: how to make Claude think, act, speak precisely, see, cite its sources, manage its memory, operate cheaply, develop expertise, and run autonomously. In Part 9, the Agent SDK removed the need to build your own agent loop. But you still had to run the infrastructure: the containers, the sandbox, the tool execution environment. If you wanted a coding agent that could safely run bash commands and write files, you needed somewhere for it to do that, and you needed to manage that somewhere yourself.

April 16, 2026

From API Calls to Autonomous Agents

Part 9 of a series on Claude API features for builders. If you’ve been following this series, you’ve been building with the raw Messages API. You’ve learned to construct tool definitions, manage the agentic loop, handle stop_reason values, and pass tool results back to Claude. All of that works, and for many use cases it’s exactly the right approach. But if you’ve built anything with more than a couple of tools, you know the boilerplate adds up. The loop management, the conversation state, the error handling, the tool execution routing: it’s a lot of plumbing before you get to the interesting part of your application.

April 13, 2026

Creating Technical Content

I’ve been creating technical content in one form or another for over 20 years. Blog posts on the AWS and Azure blogs, a book on Microsoft automation, presentations at conferences, workshops delivered to customers in cities across three continents, and more recently a 10-part series on Claude’s API for my own site. Some of it had real, measurable impact. Some of it disappeared into the void. The difference was almost never the quality of the technical material itself.

April 10, 2026

Teaching AI New Tricks

Part 8 of a series on Claude API features for builders. In Part 2 I covered how Tool Use lets Claude call functions and take actions. Tools are powerful, but they solve a specific problem: giving Claude the ability to do things. There’s a related but different problem that tools don’t solve: giving Claude the ability to know how to do things well. Think about the difference between giving someone a hammer and teaching them carpentry. A tool definition tells Claude “you can call this function.” A Skill tells Claude “here’s how to approach this entire category of work, including the best practices, the common pitfalls, the templates, and the scripts that make the output professional.”

April 9, 2026

On AI Roles

I’ve been looking at the AI job market recently to get a sense of what’s out there, so I’ve spent more time than I’d like scrolling through job posts trying to figure out what companies actually want when they post an AI role. It’s been… educational. In the last few months I’ve looked at roles titled Solutions Architect, Applied AI Engineer, AI Engineer, Forward Deployed Engineer, ML Engineer, AI Evangelist, and various manager-level versions of all of the above. Some of these are clearly different jobs. Some of them are the same job with different names. And some of them are different jobs with the same name, which is the most dangerous combination if you’re either applying for one or trying to hire for one.

April 8, 2026

Making AI Cheaper and Faster

Part 7 of a series on Claude API features for builders. Over the past six posts I’ve covered how to make Claude think, act, speak precisely, see, cite its sources, and manage its memory. All powerful capabilities. All of which cost money. If you’re building a prototype or processing a handful of requests, the per-token cost of the Claude API is trivial. But the moment you start scaling, whether it’s processing thousands of documents, running evaluations across a test suite, or powering a customer-facing product with real traffic, costs and latency become the two things you think about constantly.

April 6, 2026

On the Gap

There were two moments that sent me down the startup founder path, and neither happened at my desk. The first came while I was sitting on a tram on my way to work one day, scrolling through tech Twitter, when I came across a demo video from Google showing an early version of Gemini generating an app’s UI on the fly. A dude typed into a text box and the system generated Flutter code in real time, rendering cards, buttons, images, the whole interface, dynamically based on what the user asked for. It wasn’t a chatbot. It wasn’t a static screen. It was an application that built itself around the user’s intent.

April 6, 2026

How Much Can AI Remember?

Part 6 of a series on Claude API features for builders. Every feature I’ve covered in this series so far (thinking deeply, using tools, structured outputs, understanding documents, citing sources) shares something in common. They all consume tokens. And tokens live inside something called the context window. If you’ve ever had a long conversation with Claude and noticed it starting to “forget” things you mentioned earlier, or if you’ve tried to feed it a huge document and hit a wall, you’ve bumped into context window limits. Understanding how the context window works, and the new tools available for managing it, is one of the most practically important things you can know as someone building with the API.

April 2, 2026

On Growing People

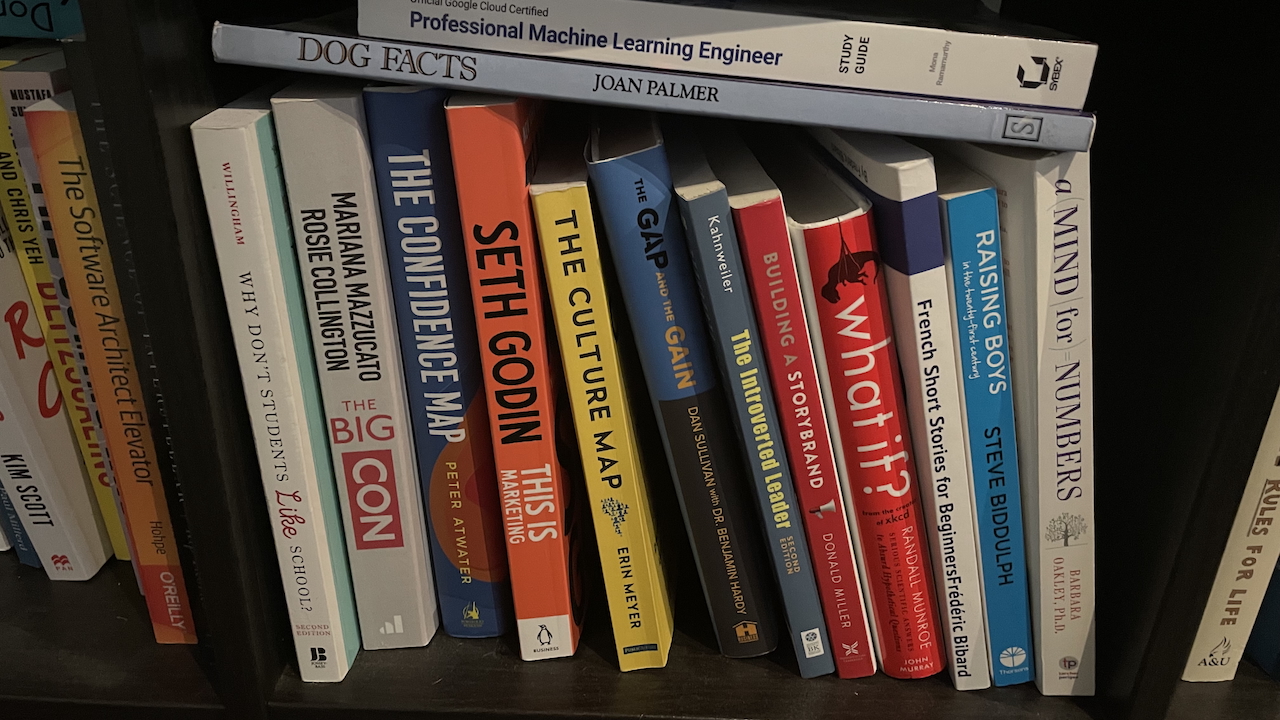

I have a shelf full of books about leadership. Radical Candor, The Manager’s Path, The Culture Map, The Introverted Leader. I’ve done the training courses, watched the videos, sat in the workshops where you role-play difficult conversations with a colleague who’s trying not to laugh. All of it useful. I’m glad I did it. But none of it, not a single page or module or framework, prepared me for the actual moment you’re sitting across from someone and need to say something they don’t want to hear.

April 2, 2026

How AI Can Cite Its Sources

Part 5 of a series on Claude API features for builders. There’s a question that comes up in every conversation about putting AI into production. It doesn’t matter who’s asking: everyone is wary of hallucinations (or confabulations, data-driven fabrications, factual inconsistencies, misinformation, or whatever else people choose to call them). The question is always some version of: “But how do I know it’s not making things up?”

March 31, 2026